Digital Citizen

Disinformation - Online - Dangerous

Fact Checking and Embedded Links

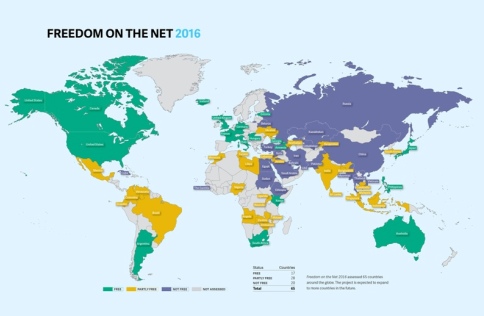

Privacy on the Net-Online Rights

Strategic Policy-Internet Online Rights

·································································

Each of us can make a positive difference

- Each of us can make a positive difference by stepping up & doing our best -- SJS/GreenPolicy360

- Video of Steve Jobs ... Speaking of Apple... People with passion can change the world for the better

···································································································

Artificial Intelligence Glossary: Neural Networks and Other Terms Explained

- The concepts and jargon you need to understand ChatGPT

A list of phrases and concepts useful to understanding artificial intelligence, in particular the new breed of A.I.-enabled chatbots like ChatGPT, Bing and Bard

Bing and Bard chatbots are being rolled out slowly, and you may need to get on their waiting lists for access. ChatGPT currently has no waiting list, but it requires setting up a free account.

Anthropomorphism: The tendency for people to attribute humanlike qualities or characteristics to an A.I. chatbot. For example, you may assume it is kind or cruel based on its answers, even though it is not capable of having emotions, or you may believe the A.I. is sentient because it is very good at mimicking human language.

Bias: A type of error that can occur in a large language model if its output is skewed by the model’s training data. For example, a model may associate specific traits or professions with a certain race or gender, leading to inaccurate predictions and offensive responses.

ChatGPT: ChatGPT, the artificial intelligence language model from a research lab, OpenAI, has been making headlines since November for its ability to respond to complex questions, write poetry, generate code, plan vacations and translate languages. GPT-4, the latest version introduced in mid-March, can even respond to images (and ace the Uniform Bar Exam).

Bing: Two months after ChatGPT’s debut, Microsoft, OpenAI’s primary investor and partner, added a similar chatbot, capable of having open-ended text conversations on virtually any topic, to its Bing internet search engine. But it was the bot’s occasionally inaccurate, misleading and weird responses that drew much of the attention after its release.

Bard: Google’s chatbot, called Bard, was released in March to a limited number of users in the United States and Britain. Originally conceived as a creative tool designed to draft emails and poems, it can generate ideas, write blog posts and answer questions with facts or opinions.

Ernie: The search giant Baidu unveiled China’s first major rival to ChatGPT in March. The debut of Ernie, short for Enhanced Representation through Knowledge Integration, turned out to be a flop after a promised “live” demonstration of the bot was revealed to have been recorded.

Emergent behavior: Unexpected or unintended abilities in a large language model, enabled by the model’s learning patterns and rules from its training data. For example, models that are trained on programming and coding sites can write new code. Other examples include creative abilities like composing poetry, music and fictional stories.

Generative A.I.: Technology that creates content — including text, images, video and computer code — by identifying patterns in large quantities of training data, and then creating original material that has similar characteristics. Examples include ChatGPT for text and DALL-E and Midjourney for images.

Hallucination: A well-known phenomenon in large language models, in which the system provides an answer that is factually incorrect, irrelevant or nonsensical, because of limitations in its training data and architecture.

Large language model: A type of neural network that learns skills — including generating prose, conducting conversations and writing computer code — by analyzing vast amounts of text from across the internet. The basic function is to predict the next word in a sequence, but these models have surprised experts by learning new abilities.
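
As a rough illustration of "predict the next word in a sequence," the sketch below counts which word most often follows each word in a tiny sample text. It is not how ChatGPT or any real large language model works internally (those use neural networks trained on vast amounts of text); the corpus, the function names `train_bigram_counts` and `predict_next`, and the counting approach are all assumptions chosen to keep the idea visible.

```python
from collections import Counter, defaultdict


def train_bigram_counts(text):
    """Count, for each word, which words follow it in the text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next(follow_counts, word):
    """Return the most frequent follower of `word`, or None if none was seen."""
    followers = follow_counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical toy corpus, invented for this illustration only.
toy_corpus = "the cat sat on the mat and the cat slept on the sofa"
counts = train_bigram_counts(toy_corpus)
print(predict_next(counts, "the"))
```

A real model replaces the simple counts with billions of learned parameters, but the task it is trained on is the same kind of guess.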

Natural language processing: Techniques used by large language models to understand and generate human language, including text classification and sentiment analysis. These methods often use a combination of machine learning algorithms, statistical models and linguistic rules.

Neural network: A mathematical system, modeled on the human brain, that learns skills by finding statistical patterns in data. It consists of layers of artificial neurons: The first layer receives the input data, and the last layer outputs the results. Even the experts who create neural networks don’t always understand what happens in between.
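
The layered structure described above can be sketched in a few lines. This is a minimal illustration only: the weights and biases are hand-picked, hypothetical numbers, the network is far smaller than anything used in practice, and the sketch shows only how data flows from the first layer to the last, not how the network learns its weights.

```python
import math


def sigmoid(x):
    """Squash any number into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of its inputs plus a bias."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


# Hypothetical, hand-picked values; a trained network would learn these from data.
HIDDEN_WEIGHTS = [[0.5, -0.5], [1.0, 1.0]]  # two hidden neurons, two inputs each
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -1.0]]  # one output neuron reading both hidden neurons
OUTPUT_BIASES = [0.0]


def forward(inputs):
    """Pass the input through the hidden layer, then through the output layer."""
    hidden = layer_forward(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return layer_forward(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)


print(forward([1.0, 1.0]))
```

Training consists of adjusting the weight and bias numbers until the outputs match known examples; with billions of such numbers, it becomes hard to say what any one of them is doing.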

Parameters: Numerical values that define a large language model’s structure and behavior, like clues that help it guess what words come next. Systems like GPT-4 are thought to have hundreds of billions of parameters.

Reinforcement learning: A technique that teaches an A.I. model to find the best result by trial and error, receiving rewards or punishments from an algorithm based on its results. This system can be enhanced by humans giving feedback on its performance, in the form of ratings, corrections and suggestions.
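
Trial and error with rewards and punishments can be sketched as follows. This is a simplified "epsilon-greedy" loop, a standard textbook technique and not the method used to train any particular chatbot. The three actions, their fixed rewards, and the function name `run_trials` are hypothetical choices made for the example.

```python
import random


def run_trials(rewards, steps=500, epsilon=0.2, seed=0):
    """Learn by trial and error which action pays best.

    Most of the time the learner repeats the action it currently rates highest;
    with probability `epsilon` it tries a random action instead.
    """
    rng = random.Random(seed)
    estimates = [0.0] * len(rewards)  # the learner's current rating of each action
    pulls = [0] * len(rewards)        # how many times each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(len(rewards))  # explore
        else:
            action = max(range(len(rewards)), key=lambda a: estimates[a])  # exploit
        reward = rewards[action]
        pulls[action] += 1
        # Running average of the rewards seen so far for this action.
        estimates[action] += (reward - estimates[action]) / pulls[action]
    return estimates, pulls


# Hypothetical rewards: action 0 is punished, action 1 is rewarded most.
REWARDS = [-1.0, 1.0, 0.2]
estimates, pulls = run_trials(REWARDS)
best_action = max(range(len(REWARDS)), key=lambda a: estimates[a])
print(best_action, pulls)
```

Human feedback, as mentioned above, would enter this loop as the source of the reward numbers: ratings and corrections from people take the place of the fixed `REWARDS` list.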

Transformer model: A neural network architecture useful for understanding language that does not have to analyze words one at a time but can look at an entire sentence at once. This was an A.I. breakthrough, because it enabled models to understand context and long-term dependencies in language. Transformers use a technique called self-attention, which allows the model to focus on the particular words that are important in understanding the meaning of a sentence.
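
The self-attention idea can be sketched with a few lines of arithmetic. This is a heavily simplified version, assuming each word is already represented by a short list of numbers: the word vectors below are invented, and real transformers add learned projection matrices, multiple attention heads, and many stacked layers that are omitted here.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """For every word, weigh all words in the sentence at once and blend them."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of this word to every word (scaled dot product).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # New representation: weighted mix of all word vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical 2-number vectors for a three-word sentence; words 0 and 2 are alike.
word_vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(word_vectors)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much attention one word pays to every word in the sentence, including itself; similar words receive larger weights.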

○

A Look into the 'Telegram' App

Via Wired

February 2022

Read the full story about the origins of Telegram

(article excerpts)

For years now, the world has fretted over Facebook's—now Meta's—seemingly inexorable dominance: its relentless neutralization of competitors either by acquisition or elimination; its subjugation of politics, culture, and every facet of intimate life to the priorities of an algorithm built for ad sales; its succession of escalating privacy scandals; and its record of disingenuous apologies when it gets caught. But over the past year or so, Mark Zuckerberg's empire has begun to look a little less invulnerable. Lawmakers have increasingly arrayed against it, and at brief moments—like the January 2021 mass exodus from WhatsApp, and a second one that followed a Facebook outage in October—the powerful network effects that drive Meta's supremacy have seemed to shift briefly into reverse. Somehow Telegram, with its tiny staff, has become one of the greatest beneficiaries of those stumbles.

Whether this is a good thing for the world is another question, one muddied by how poorly understood Telegram is, especially in the US. The vast majority of journalists still refer to it as an “encrypted messaging app.” This description unnerves many security experts, who warn that, unlike Signal or WhatsApp, Telegram is not end-to-end encrypted by default; that users must go out of their way to turn on the app's “secret chats” function (which few people actually do); and that only individual conversations, not those among groups, can be end-to-end encrypted. For the millions of people who use Telegram under repressive regimes, experts say, that confusion could be costly.

But the term “messaging app” is itself somewhat misleading, in ways that lead many to underestimate Telegram. Over the years, the app has become a deliberate hybrid of a messaging service and a social media platform—a rival not only to WhatsApp and Signal but also, increasingly, to Facebook itself. Users can join public or private channels with unlimited numbers of followers, where anyone can like, share, or comment. They can also join private groups with up to 200,000 members—a scale that dwarfs WhatsApp's 256-member limit. But unlike Facebook, at Telegram there is no targeted advertising and no algorithmic feed...

It's been vital to pro-democracy protesters from Belarus to Hong Kong, but the global right seems to find Telegram particularly congenial...

In interviews, (Telegram's Russian founder) Durov would depict Telegram as a distributed company, free of any one country's jurisdiction and security apparatus—and, above all, beyond the grip of Putin's Russia. He portrayed himself to the Times as an “exile,” a depiction that would go on to reappear in countless press accounts. The paper described him as a “nomad, moving from country to country every few weeks with a small band of computer programmers.” Durov's Instagram feed seemed to bear this out, with snapshots of glamorous hotels and landmarks in the places he stayed—in Beverly Hills, Paris, London, Rome, Venice, Bali, Helsinki...

In 2015 alone, Telegram's small team created a platform for users to create and publish their own chatbots; they added reply, mention, and hashtag functions to group chats; they added in-app video playback and a new photo editor; and, for the first time, they introduced public channels for those wanting to broadcast to an unlimited number of followers. Only Facebook, with its much larger staff, was adding features at a comparable rate...

In the world of social media, Telegram is a distinct oddity. Often rounding out lists of the world's 10 largest platforms, it has just around 30 core employees, had no source of ongoing revenue until very recently, and—in an era when tech firms face increasing pressure to quash hate speech and misinformation—exercises virtually no content moderation, except to take down illegal pornography and calls for violence. At Telegram it is an article of faith, and a marketing pitch, that the company's platform should be available to all, regardless of politics or ideology. “For us, Telegram is an idea,” Pavel Durov, Telegram's Russian founder, has said. “It is the idea that everyone on this planet has a right to be free.”

·························································································

NewsGuard targets Disinformation / Misinformation

··················································································

Hacking the 2016 US Presidential Election

Turning social media and database marketing into political persuasion

- An inside look at the U.S. 2016 presidential campaign - "The Great Hack"


Political Marketing, Oppo Politics, Disinfo, Data Manipulation

"What happens when anyone can make it appear as if anything has happened, regardless of whether or not it did?"

Worse because of our ever-expanding computational prowess; worse because of ongoing advancements in artificial intelligence and machine learning that can blur the lines between fact and fiction; worse because those things could usher in a future where anyone could make it “appear as if anything has happened, regardless of whether or not it did.”

················································································

·································································

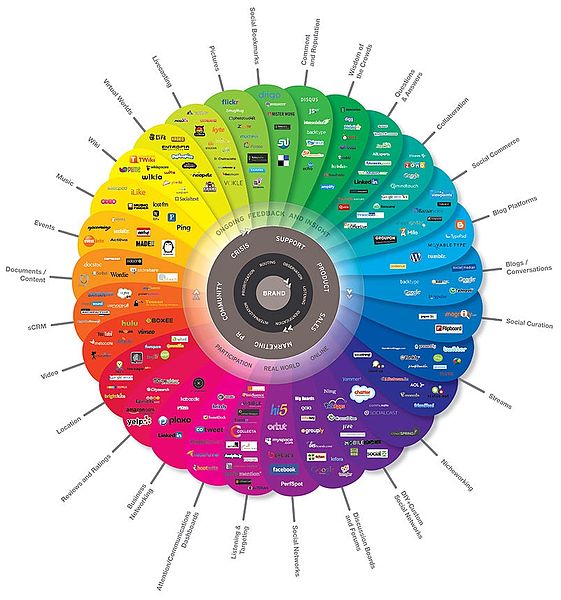

Digital Citizens

Social Media

·································································

Online Education

································································

Visit the International Society for Technology in Education (ISTE)

Educational technology is today's challenge

Goal:

To empower educators and parents to create a healthy digital culture at school and home.

To refocus conversations around digital citizenship towards practical approaches that help educators and parents support young people in a highly digital world.


·····························································································

Digital ....

A

Alliance for Affordable Internet - http://a4ai.org/

American Principles Project - http://www.americanprinciplesproject.org/blog/congress-investigates-big-data-and-increasing-loss-of-student-privacy

Atlantic - http://www.theatlantic.com/politics/archive/2014/07/a-new-surveillance-whistleblower-emerges/374722/

B

BBC - http://news.bbc.co.uk/2/hi/8548190.stm

BSA/The Software Alliance - http://www.bsa.org/

D

Daily Dot - http://www.dailydot.com/technology/virtru-email-encryption-android-app/

Digital Bill of Rights - http://jameslosey.com/post/79356515492/an-overview-of-calls-for-rights-or-principles-for-the

Digital Rights at Wikipedia - http://en.wikipedia.org/wiki/Digital_rights

E

Esquire - http://www.esquire.com/blogs/news/winning-the-war-on-info

F

Fast Company Labs - http://www.fastcolabs.com/3014238/tracking/lots-of-people-can-read-your-private-chats-not-just-the-nsa

Freedom Online Coalition - http://www.freedomonline.ee/

G

Ghostery - https://www.ghostery.com/en/

Global Network Initiative - https://globalnetworkinitiative.org/

- Principles on Freedom of Expression and Privacy - https://globalnetworkinitiative.org//principles/index.php

Guardian - http://www.theguardian.com/technology/2014/jun/20/little-privacy-in-the-age-of-big-data

H

Hackers News Bulletin - http://www.hackersnewsbulletin.com/2014/07/nsa-tracking-every-tor-user.html

Hunton Privacy Blog - https://www.huntonprivacyblog.com/

I

Inside Counsel - http://www.insidecounsel.com/2014/06/20/whos-mining-the-store-big-data-brokers-and-the-ris

Internet Governance Project - http://www.internetgovernance.org/

Internet Privacy at Wikipedia - http://en.wikipedia.org/wiki/Internet_privacy

L

LinkIs – You Leave a Trail w/ Everything You Do Online - http://linkis.com/youtu.be/bxPZU (online video)

M/N

New America Foundation/Kevin Bankston - http://newamerica.net/user/602

NPR/On Point - http://onpoint.wbur.org/2014/01/08/nsa-cryptography-quantum

O

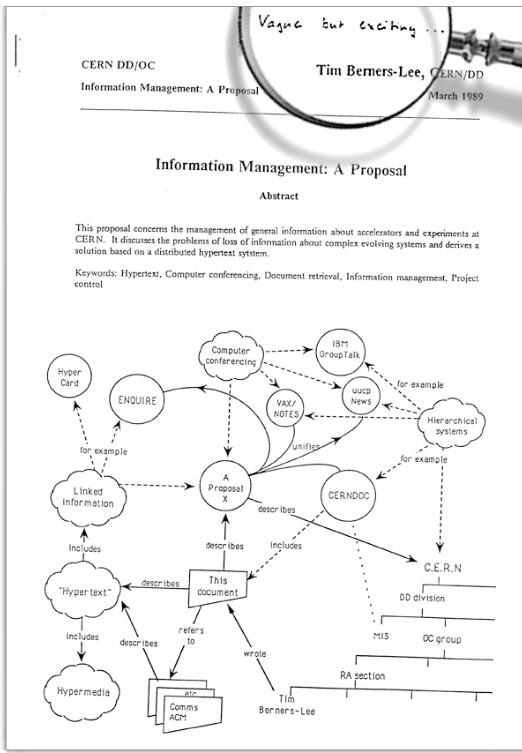

Online Magna Carta - http://www.theguardian.com/technology/2014/mar/12/online-magna-carta-berners-lee-web

Online Publishers Association - http://www.online-publishers.org/

Open Access Overview - http://legacy.earlham.edu/~peters/fos/overview.htm

Open Architecture Network - http://openarchitecturenetwork.org/

Open Internet Tools Project - https://openitp.org/

Open Rights Group - https://www.openrightsgroup.org/

Open Technology Institute - http://oti.newamerica.net/

P

PBS - www.pbs.org/newshour/bb/data-brokers-really-know-us

PC World - www.pcworld.com/article/2366165/7-in-10-concerned-about-security-of-internet-of-things.html

Pew Research Center - http://www.pewinternet.org/2014/07/03/net-threats/

ProPublica - http://www.propublica.org/article/everything-we-know-about-what-data-brokers-know-about-you

Q/R

Reader Supported News - http://www.readersupportednews.org/opinion2/277-75/23560-taking-on-americas-runaway-surveillance-state

Reform Government Surveillance - https://www.reformgovernmentsurveillance.com/

S

Save the Net - http://www.savetheinternet.com/about-sti

SearchNetworking (TechTarget) - http://www.searchnetworking.techtarget.com/opinion/Big-data-fail-Network-security-monitoring-wont-get-you-too-far

Section 215/"Patriot Act" - https://www.aclu.org/free-speech-national-security-technology-and-liberty/reform-patriot-act-section-215

Section 702/"FISA" - http://fas.org/irp/news/2013/06/nsa-sect702.pdf

Social Concept Consulting - http://www.socialconceptsconsulting.com/google-internet-privacy

T

ThinkProgress - http://www.thinkprogress.org/culture/2014/04/29/3432050/can-you-hide-from-big-data

U

UN / The Right to Privacy in the Digital Age-June 2014 - http://www.ohchr.org/EN/Issues/DigitalAge/Pages/DigitalAgeIndex.aspx

UN / United Nations Promotion and protection of human rights and fundamental freedoms while countering terrorism - https://firstlook.org/theintercept/2014/10/15/un-investigator-report-condemns-mass-surveillance/

V/W

VentureBeat - http://venturebeat.com/2014/07/20/tired-of-being-spied-on-these-startups-try-to-keep-your-secrets-safe/

Verified Voting - https://verifiedvoting.org/

Vox - http://www.vox.com/2014/7/9/5880403/13-ways-the-nsa-spies-on-us

Web We Want - https://webwewant.org/

Wim Says – If You’re Not Paying for It, then You’re the Product - http://www.wimrampen.com/2014/03/16/big-data-trust-and-you-as-the-product/

Wired / How to Save the Net - http://www.wired.com/2014/08/how-to-save-the-net/ - http://www.wired.com/2014/08/save-the-net-vinton-cerf/

Wired - http://www.wired.com/2011/06/internet-a-human-right/

○

Digital Citizen & Digital Rights Movement

_____________________________________________________________________

______________________________

GreenPolicy360 Digital.Links

Links via GreenPolicy's Digital360 Network

- Access Now ('Digital Human Rights') - https://www.accessnow.org/

- Alliance for Affordable Internet - http://a4ai.org/

- ArcGIS Open Data Platform - https://opendata.arcgis.com/about

- ASPI Cyber Policy - http://cyberpolicy.aspi.org.au/

- Center for Data Innovation - http://www.datainnovation.org/ - http://www.datainnovation.org/2014/04/the-economic-impact-of-open-data/

- Center for Democracy and Technology - https://cdt.org/

- (FB) https://www.facebook.com/CenDemTech

- (TW) https://twitter.com/CenDemTech

- (Wiki) http://en.wikipedia.org/wiki/Center_for_Democracy_and_Technology

- Citizen Lab - https://citizenlab.ca/

- Coalition for a Safer Web - https://coalitionsw.org/media/

- Code for America - http://codeforamerica.org/issues/openness/

- CommonFutures EU/Int'l - http://CommonFutures.eu/the-future-of-open-possibly-the-largest-open-network-in-the-world

- Creative Commons - http://CreativeCommons.org

- Cybersecurity for Democracy - https://cybersecurityfordemocracy.org/

- Tools to increase researchers' ability to study online platforms

- Data.gov, US Government open data - http://www.data.gov/

- 124K+ data sets as of 2015 - http://www.data.gov/metrics

- EcoInforma - http://www.data.gov/ecosystems/ecoinforma/

- Dbpedia - http://dbpedia.org/About

- Decent Security - http://www.decentsecurity.com/blog/

- Digital Bill of Rights - http://jameslosey.com/post/79356515492/an-overview-of-calls-for-rights-or-principles-for-the

- Digital Citizens Alliance - https://www.digitalcitizensalliance.org/

- E Pluribus Unum, Dispatches on Open Government and Technology - http://e-pluribusunum.org/

- Electronic Frontier Foundation - https://www.eff.org/

- (RSS) https://www.eff.org/rss

- (Wiki) http://en.wikipedia.org/wiki/Electronic_Frontier_Foundation

- (Parker Higgins-EFF) https://twitter.com/xor

- (recommended by EFF)

- Electronic Privacy Information Center (EPIC) - https://www.epic.org/

- Energy and Environment Legislation Tracking Database (US) - http://NCSL.org/issues-research/energyhome/energy-environment-legislation-tracking-database.aspx

- eQualit.ie - https://equalit.ie/equalit-ie-manifesto/

- Advancing the International Bill of Human Rights - http://www.ohchr.org/Documents/Publications/Compilation1.1en.pdf

- Fact-Checking Projects

- http://www.poynter.org/2017/there-are-now-114-fact-checking-initiatives-in-47-countries/450477/

- http://www.poynter.org/2016/there-are-96-fact-checking-projects-in-37-countries-new-census-finds/396256/

- Beginning with PolitiFact, the original project at Poynter Institute in St. Petersburg, Florida - https://en.wikipedia.org/wiki/PolitiFact.com

- http://www.poynter.org/about-the-international-fact-checking-network/

- http://reporterslab.org/fact-checking/

- http://reporterslab.org/category/fact-checking/#article-1384

- http://reporterslab.org/global-fact-checking-up-50-percent/

- Fight for the Future - http://www.fightforthefuture.org/

- Free Software Foundation / GNU - http://www.fsf.org/ - https://www.gnu.org/home.en.html

- Freedom of Information Requests/Government Attic (US) - http://GovernmentAttic.org

- Freedom of the Press Foundation - https://freedom.press/ - https://freedom.press/about/board

- (TW) https://twitter.com/FreedomofPress

- (FreedomPress Organizations) https://freedom.press/organizations

- Freedom Online Coalition - http://www.freedomonline.ee/ - http://www.freedomonline.ee/about-us/Freedom-online-coalition

- Freenet - https://freenetproject.org/

- Fundar - http://fundar.org.mx/

- Future of the Internet (blog) - http://futureoftheinternet.org/blog/

- General Data Protection Regulation (GDPR) - https://en.wikipedia.org/wiki/General_Data_Protection_Regulation

- Github and Open Data update - https://github.com/project-open-data - http://m.theatlantic.com/technology/archive/2014/02/catch-my-diff-githubs-new-feature-means-big-things-for-open-data/283673/

- Global Digital Citizen Foundation - https://globaldigitalcitizen.org/

- Global Network Initiative - https://globalnetworkinitiative.org/

- Principles on Freedom of Expression and Privacy - https://globalnetworkinitiative.org//principles/index.php

- https://globalnetworkinitiative.org/sites/default/files/GNI_-_Principles_1_.pdf

- Global Open Data Initiative - http://GlobalOpenDataInitiative.org

- Global Partners - http://www.global-partners.co.uk/

- Global Project Against Hate and Extremism - https://www.inach.net/global-project-against-hate-and-extremism/

- Global Voices - http://globalvoicesonline.org/-/topics/digital-activism/

- GNU / Free Software Foundation - https://www.gnu.org/home.en.html - http://www.fsf.org/

- GNU Project - https://en.wikipedia.org/wiki/GNU_Project

- Government Accountability Project - http://www.whistleblower.org/

- Government Lab - http://thegovlab.org/

- GovFuturesLab - Reimagining governance for an age of planetary challenges and human responsibility

- (FB) https://Facebook.com/govfutures

- App4Gov (TW) https://Twitter.com/App4Gov

- GovLoop (US) training/social media/open gov - https://www.govloop.com/

- GovTrack - https://www.Govtrack.us/

- GreenPolicy360 / Digital Citizen - http://www.greenpolicy360.net/w/Category:Digital_Citizen

- Information Technology and Innovation Foundation - http://www.itif.org/

- Institute for the Future - http://iftf.org/govfutures

- Internet Archive - Wayback Machine - https://archive.org/

- Internet.org "Connecting the World" - https://info.internet.org/en/

- Internet Bill of Rights / Internet Rights and Principles Coalition - http://internetrightsandprinciples.org/site/

- Internet Freedom Festival (IFF)

- Internet Governance Project - http://www.internetgovernance.org/

- Internet Society - http://www.internetsociety.org/who-we-are/mission

- IT for Change (India) - http://www.itforchange.net/

- Linked Data - http://linkeddata.org/

- Mobile Commons - https://www.mobilecommons.com/about/

- Mozilla Foundation Webmaker Tools - https://webmaker.org/en-US/tools

- Muckrock News - https://www.muckrock.com/news/

- New Media Rights - http://www.newmediarights.org/

- News Integrity Initiative - https://www.journalism.cuny.edu/2017/04/announcing-the-new-integrity-initiative/

- Online Magna Carta - http://www.theguardian.com/technology/2014/mar/12/online-magna-carta-berners-lee-web

- Open Access Publishing - http://en.wikipedia.org/wiki/Open_access

- Open Congress (U.S.) - https://www.opencongress.org/

- Open Data Institute UK-Int'l - http://TheODI.org/about-us - http://TheGuardian.com/public-leaders-network/2013/oct/22/open-data-institute-summit-gavin-starks

- Open Data movement (Wikipedia) - http://en.wikipedia.org/wiki/Open_Data

- Open Data Nation - http://www.opendatanation.com/ ("Smart Cities and Small Businesses")

- Open Data Research Network - http://opendataresearch.org/

- http://opendataresearch.org/emergingimpacts

- http://opendataresearch.org/content/2014/646/towards-common-methods-assessing-open-data-workshop-report

- Open Data - World Bank - http://data.worldbank.org/

- OpenDemocracy - https://www.opendemocracy.net/about

- https://www.opendemocracy.net/digitaliberties

- https://www.opendemocracy.net/openglobalrights

- (TW) https://twitter.com/opendemocracy

- Open Government Initiative (US) - http://www.whitehouse.gov/open

- Open Government in Practice - https://openlibrary.org/books/OL24435672M/Open_Government

- Open Government Partnership - International - http://OpenGovPartnership.org

- Open Institute - http://openinstitute.com/

- Open Knowledge Foundation Lab - http://okfn.org/

- (Blog) PublicBodies.org - http://blog.okfn.org/2013/07/09/introducing-open-knowledge-foundation-labs/

- Open Rights Group - https://www.openrightsgroup.org/

- Open Science - http://opensciencefederation.com/

- Open Secrets/Center for Responsive Politics - http://www.opensecrets.org/

- Open Source @Wikipedia - https://en.wikipedia.org/wiki/Open_source

- Open Source Ecology - http://opensourceecology.org/ - http://opensourceecology.org/gvcs/

- Open Source Hardware (wiki) - http://en.wikipedia.org/wiki/Open_source_hardware

- Open Standards Principles (UK) - https://www.gov.uk/government/publications/open-standards-principles/open-standards-principles

- Open State Foundation - http://www.openstate.eu/

- Open Technology Institute/New America Foundation - http://newamerica.org/oti/

- OpenGov Foundation - http://OpenGovFoundation.org

- OpenGov Hub - https://twitter.com/OpenGovHub

- OpenMedia International - https://openmedia.org/

- OpenNet Initiative - http://cyber.law.harvard.edu/research/opennet

- Peer 2 Peer-P2P Foundation - http://p2pfoundation.net/

- Personal Democracy Forum/Media - https://personaldemocracy.com/

- Civic Hall - http://civichall.org/

- Piwik analytics - http://piwik.org/

- Privacy Rights in the Digital Age (Published 2016)

- Project on Government Oversight - http://www.pogo.org/

- PublicAgenda - http://PublicAgenda.org

- Public Resource Org ("Making Government Information More Accessible") - https://public.resource.org/

- RDF Standards - http://W3.org/RDF

- Reform Government Surveillance - https://www.reformgovernmentsurveillance.com/

- Research Data Sharing without Barriers - https://RD-Alliance.org

- Reset the Net - https://www.resetthenet.org/

- SafeGov - http://www.safegov.org/

- Save Internet Privacy - https://www.saveinternetprivacy.org

- Save the Internet (US) - http://www.savetheinternet.com/sti-home

- Schneier on Security - https://www.schneier.com/about.html

- Software Freedom Law Center - https://www.softwarefreedom.org/

- SpiderOak - https://spideroak.com/

- State Decoded - http://StateDecoded.com

- Sunlight Foundation - http://sunlightfoundation.com/

- (FB) https://www.facebook.com/sunlightfoundation

- (G+) https://plus.google.com/+sunlightfoundation/posts

- (TW) https://twitter.com/sunfoundation

- Measuring the Impact of Open Data

- Surveillance Studies Centre - http://www.sscqueens.org/ - http://www.surveillance-studies.net/

- The Forum on Information and Democracy - https://informationdemocracy.org/

- The Constituent (US) - https://The-Constituent.com

- Thoughtworks - http://www.thoughtworks.com/

- Truthiness Collaborative - https://www.annenberglab.com/projects/truthiness-collaborative

- UN/United Nations Promotion and protection of human rights and fundamental freedoms while countering terrorism - https://firstlook.org/theintercept/2014/10/15/un-investigator-report-condemns-mass-surveillance/

- US Gov-EPA Databases - http://Water.EPA.gov/aboutow/owow/data.cfm

- VYPR VPN - http://www.goldenfrog.com/vyprvpn

- Web We Want (World Wide Web Foundation) - http://webfoundation.org/2013/12/announcing-the-web-we-want/ - https://webwewant.org/

- WebTAP / Web Transparency & Accountability Project (Princeton University) - https://webtap.princeton.edu/

- Wikipedia

- https://en.wikipedia.org/wiki/Wikipedia

- https://en.wikipedia.org/wiki/MediaWiki

- https://en.wikipedia.org/wiki/Wikimedia_Foundation

- Jimmy Wales / Founder - https://en.wikipedia.org/wiki/Jimmy_Wales

- Wikiversity - https://en.wikiversity.org/wiki/Wikiversity:Main_Page

- World Summit on the Information Society +10 - http://en.wikipedia.org/wiki/World_Summit_on_the_Information_Society

- World Wide Web Consortium (W3C) - http://www.w3.org/

- World Wide Web Foundation, est. by World Wide Web inventor Tim Berners-Lee, "devoted to all people having access to the Web"

- WebFoundation.org -- "The Web Belongs to All of Us"

March 2017 update: Open letter from Berners-Lee on threats to the Web

1) "We’ve lost control of our personal data"; 2) "It’s too easy for misinformation to spread on the web"; 3) "Political advertising online needs transparency and understanding"

- We must work together with web companies to strike a balance that puts a fair level of data control back in the hands of people.

- We must fight against government over-reach in surveillance laws, including through the courts if necessary.

- We must push back against misinformation. We urgently need to close the “internet blind spot” in the regulation of political campaigning.